Methods

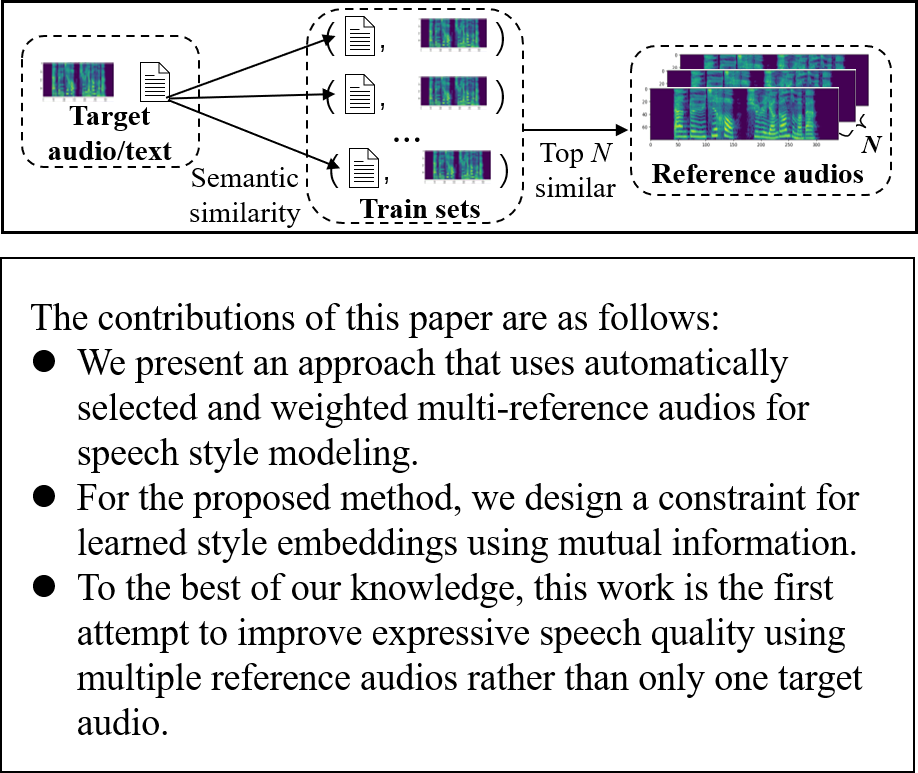

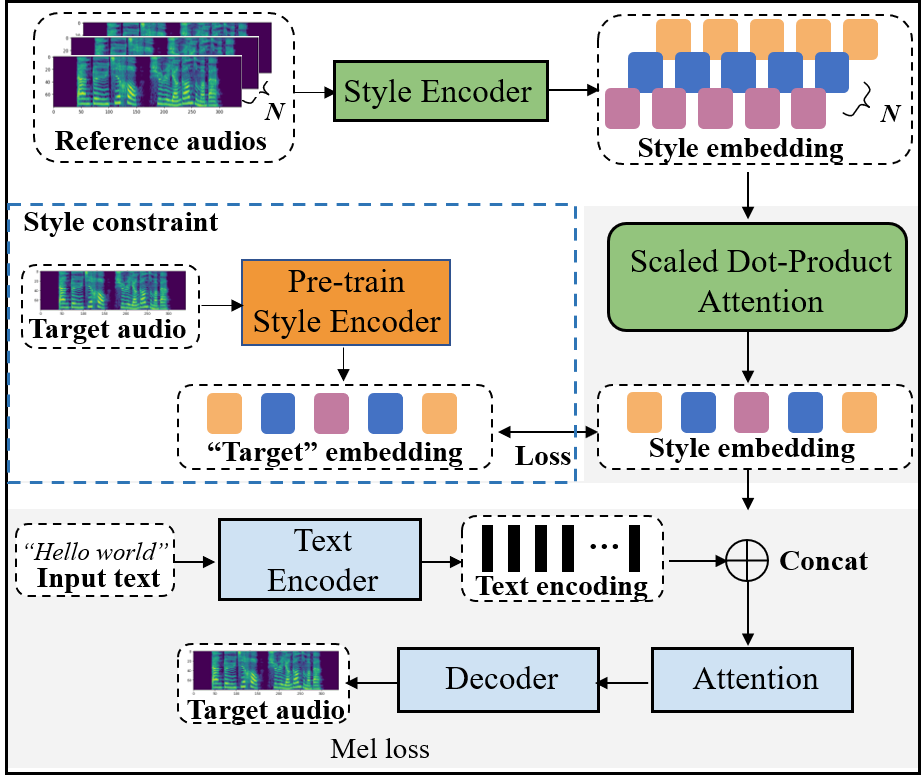

The end-to-end speech synthesis model can directly take an utterance as reference audio, and generate speech from the text with prosody and speaker characteristics similar to the reference audio. However, an appropriate acoustic embedding must be manually selected during inference. Due to the fact that only the matched text and speech are used in the training process, using unmatched text and speech for inference would cause the model to synthesize speech with low content quality. In this study, we propose to mitigate these two problems by using multiple reference audios and style embedding constraints rather than using only the target audio. Multiple reference audios are automatically selected using the sentence similarity determined by Bidirectional Encoder Representations from Transformers (BERT). In addition, we use “target” style embedding from a pre-trained encoder as a constraint by considering the mutual information between the predicted and “target” style embedding. The experimental results show that the proposed model can improve the speech naturalness and content quality with multiple reference audios and can also outperform the baseline model in ABX preference tests of style similarity.

These examples are sampled from the evaluation set for Table 1 in the paper. Each column corresponds to a single text, and each row corresponds to different methods. Our proposed final method at last row, P10, showed the best result from evalutation including MOS and ABX test in terms of the speech quality and style similarity.

| Ground-truth | ||||||

| B1 | ||||||

| B2 | ||||||

| P10 |

| B3 | ||||||

| B4 | ||||||

| P1 | ||||||

| P3 | ||||||

| P4 | ||||||

| P5 | ||||||

| P6 | ||||||

| P7 | ||||||

| P8 |

@misc{gong2021using,

title={Using multiple reference audios and style embedding constraints for speech synthesis},

author={Cheng Gong and Longbiao Wang and Zhenhua Ling and Ju Zhang and Jianwu Dang},

year={2021},

eprint={2110.04451},

archivePrefix={arXiv},

primaryClass={cs.SD}

}